How to Practice for McKinsey Solve in 2026 (Simulation-Based Prep Guide)

How to use AI simulation to prepare for McKinsey Solve — practice timelines, scoring benchmarks, and mistakes to avoid.

New to the assessment? Start with our McKinsey Solve 2026 overview — format, all 3 games, scoring, and pass rates. This guide focuses on how to practice once you know the basics.

Why Simulation Is the Only Preparation That Matches the Test

McKinsey's Problem Solving Game, officially called "Solve", eliminates over 60% of candidates before they ever reach a case interview. The assessment is procedurally generated, strictly timed, and designed to resist memorization. No two candidates receive the same test.

That's exactly why McKinsey Solve simulation practice has become the single most effective preparation method: it mirrors real test conditions with AI-generated scenarios you've never seen before. Unlike static PDF guides or YouTube walkthroughs, a simulator trains your decision-making process rather than your memory — which is precisely what McKinsey is evaluating.

This guide covers everything you need to know about using simulation to prepare for McKinsey Solve 2026, from how the games work to how many sessions you need to the mistakes that cost candidates their offers.

What McKinsey Solve Simulation Is

A McKinsey Solve simulation is an AI-powered practice environment that replicates the actual Problem Solving Game. The simulator generates unique scenarios every session — different species data, different environmental constraints, different datasets — just like the real assessment does.

This matters because memorizing answers from a prep guide won't help when you're facing a completely new ecosystem or dataset on test day. The McKinsey Solve 2026 assessment consists of two core scored games, Sea Wolf (ecosystem building) and Red Rock (data interpretation), and some candidates also receive a third module called the Sustainable Future Lab (behavioral decision-making). A simulation platform lets you practice the two analytical games under a realistic time limit of roughly 35 minutes per game, with instant feedback on your performance.

The Games You Need to Master

Sea Wolf: Ecosystem Building

The Sea Wolf Game presents you with a marine ecosystem where you must select species that form a sustainable food chain. You're given a set of organisms — producers, primary consumers, and predators — along with data about their caloric needs, caloric output, and depth/temperature preferences.

The challenge is selecting the right combination of species that can coexist without any organism starving or being outcompeted. With dozens of possible species and strict environmental constraints, there are thousands of possible combinations but only a handful of optimal ones. McKinsey Solve simulation practice for Sea Wolf generates fresh species data each session, forcing you to apply the underlying optimization logic rather than recall memorized answers.
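The kind of constraint checking described above can be sketched in a few lines of Python. This is a deliberately simplified model, not the game's actual rule set: the `Species` fields, the one-food-source-per-species rule, and the example organisms and numbers are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Species:
    name: str
    calories_provided: int         # calories available to whatever eats this species
    calories_needed: int           # calories it must consume (0 for producers)
    depth_range: Tuple[int, int]   # (min_depth_m, max_depth_m) it tolerates
    prey: Optional[str] = None     # assumed: at most one food source per species


def shared_depth(selection: List[Species]) -> bool:
    """True if at least one depth is tolerated by every selected species."""
    highest_min = max(s.depth_range[0] for s in selection)
    lowest_max = min(s.depth_range[1] for s in selection)
    return highest_min <= lowest_max


def is_viable(selection: List[Species]) -> bool:
    """Simplified check: common habitat, and no food source is over-eaten."""
    if not shared_depth(selection):
        return False
    supply = {s.name: s.calories_provided for s in selection}
    demand = {name: 0 for name in supply}
    for s in selection:
        if s.prey is None:
            continue                    # producers need no prey
        if s.prey not in supply:
            return False                # its food source was not selected, so it starves
        demand[s.prey] += s.calories_needed
    return all(demand[name] <= supply[name] for name in supply)


# Hypothetical species, invented for this example.
algae = Species("algae", 5000, 0, (0, 50))
krill = Species("krill", 2000, 3000, (10, 60), prey="algae")
seal = Species("seal", 1000, 1500, (0, 40), prey="krill")
shark = Species("shark", 800, 1000, (0, 45), prey="krill")
deep_fish = Species("deep_fish", 900, 500, (80, 120), prey="krill")
```

Here `is_viable([algae, krill, seal])` holds, while adding `shark` pushes the demand on krill to 2,500 calories against a supply of 2,000, and adding `deep_fish` leaves no depth that every species tolerates. Working through checks like these by hand is what timed practice is meant to make fast.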

The Sea Wolf Solver complements simulation practice by helping you understand what mathematically optimal selections look like. The solver teaches you which variables drive the best outcomes; the simulation trains you to find those outcomes under pressure.

Red Rock: Data Interpretation

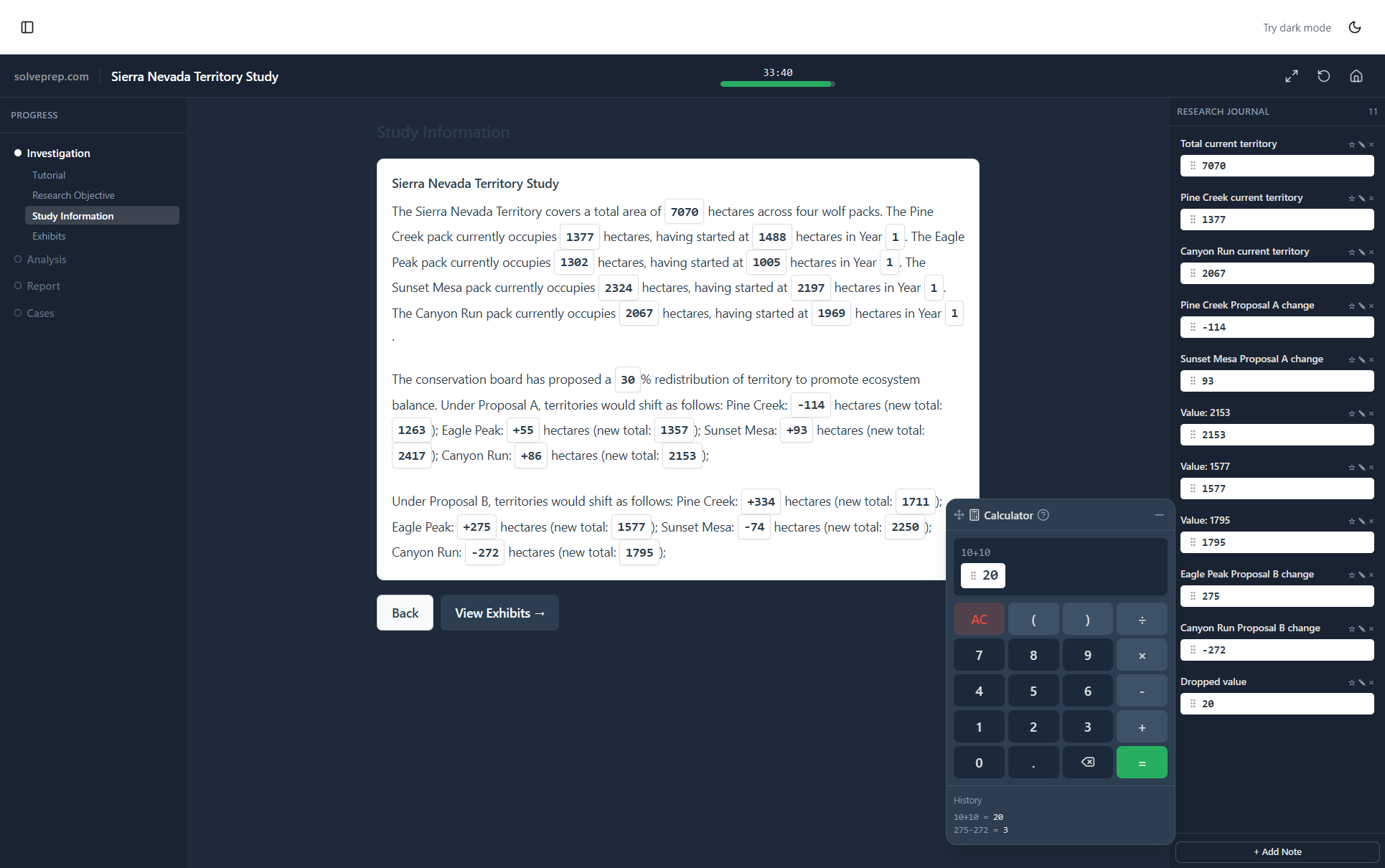

The Red Rock Study tests your ability to analyze geological data through charts, scatter plots, and maps. You're asked to identify patterns, draw conclusions from datasets, and answer questions about relationships between variables — all under time pressure.

Red Rock simulation practice is critical because the data visualizations change every time. You need to develop rapid chart-reading skills and pattern recognition that transfer to any dataset McKinsey presents. The data formats, chart types, and question structures in simulation mirror what you'll encounter on test day.

Sustainable Future Lab: Behavioral Decision-Making

Since early 2026, some candidates, particularly those applying to offices in Germany, the Middle East, and other select regions, have been encountering a third module called the Sustainable Future Lab. If your test invitation indicates an 85-minute window instead of the standard 65 minutes, you'll face this module in addition to Sea Wolf and Red Rock.

The Sustainable Future Lab is fundamentally different from the other two games. Rather than testing analytical optimization or data interpretation, it evaluates behavioral judgment — how you navigate team dynamics, stakeholder conflicts, and interpersonal decisions in consulting-style scenarios. Think of it as a situational judgment test embedded within the Solve assessment.

This module is harder to simulate with AI-generated scenarios because the "right" answers depend on judgment rather than calculation. However, understanding the format and the type of reasoning McKinsey rewards is still a significant advantage over going in blind. Candidates who know that McKinsey values collaborative problem-solving, structured communication, and consistency across responses are better positioned to perform well. We cover preparation strategies in detail in our Sustainable Future Lab guide.

Why AI Simulation Beats Static Practice Materials

Most candidates prepare using PDF guides, blog posts, or recorded walkthroughs. These resources are useful for understanding game mechanics, but they have a fundamental limitation: they show you the same scenarios every time. Once you've seen the solutions, their practice value drops to zero.

AI-powered simulation is categorically more effective for five reasons.

Unique scenarios every session. The AI generates new species data, new food chains, new charts, and new datasets. You can't memorize your way through — you have to develop the actual skill.

Real-time feedback. After each attempt, you see exactly where your selections were suboptimal and why. Candidates consistently report that this accelerates learning by 3–5x compared to reviewing static answer keys where you're never sure whether your approach was correct or just acceptable.

Score tracking over time. Simulators track your accuracy, speed, and improvement trajectory. You know exactly when you're ready for the real assessment rather than guessing based on how confident you feel.

Time pressure conditioning. Practicing under the same ~35-minute constraints as the real test builds the composure and pacing you need on assessment day. Manual timers don't replicate the cognitive pressure of a real countdown — the knowledge that every second matters changes how your brain processes information.

Pattern recognition development. After 10–15 simulation sessions, candidates report that ecosystem patterns and data relationships become intuitive rather than analytical. That shift from conscious analysis to instinctive recognition is the exact skill McKinsey is testing for.

Static materials teach you what the games are. Simulation teaches you how to perform in them.

How to Practice Effectively

Having access to a McKinsey Solve simulation platform is only half the equation. How you practice matters as much as how often. Here's the approach that consistently produces the highest pass rates.

Start Slow and Focus on Accuracy

Your first 3–4 sessions should ignore the timer entirely. Focus on understanding why certain species combinations work and others don't, why specific data patterns lead to correct conclusions, and where the common traps are. Get the logic right before adding speed. Candidates who start with timed sessions before understanding the underlying mechanics develop bad habits that are harder to correct later.

Analyze Every Feedback Report

After each simulation, spend 5–10 minutes reviewing what you got wrong and why. Look for patterns in your mistakes — are you consistently misjudging caloric requirements? Overlooking depth constraints? Missing data relationships in scatter plots? The feedback review is where most of the actual learning happens. Rushing into the next session without reflecting on the last one wastes a significant portion of your practice investment.
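One lightweight way to spot recurring mistakes is to tag each error during the feedback review and count the tags across sessions. The sketch below assumes you keep your own log; the tag names and the `mistake_log` data are hypothetical, not output from any particular simulator.

```python
from collections import Counter

# Hypothetical review log: one list of mistake tags per session.
mistake_log = [
    ["caloric_requirements", "depth_constraint"],
    ["caloric_requirements"],
    ["scatter_plot_relationship", "caloric_requirements"],
]


def most_common_mistakes(log, top_n=1):
    """Count every tag across all sessions and return the most frequent ones."""
    counts = Counter(tag for session in log for tag in session)
    return counts.most_common(top_n)


print(most_common_mistakes(mistake_log))  # [('caloric_requirements', 3)]
```

A tag that keeps topping the list is a good candidate for a focused session before you move on.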

Track Your Scores Across Sessions

Plot your accuracy and completion time session over session. You should see a clear trend in both metrics: accuracy rising and completion time falling. If your scores plateau after several sessions, that's a signal to change your approach (try a different decision framework, focus on a specific weakness, or increase difficulty) rather than grinding more sessions with the same strategy.
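If you log your scores yourself, a plateau can be flagged with a simple comparison of recent sessions against the ones before them. This is a minimal sketch: the window of three sessions and the two-point minimum gain are arbitrary assumptions, and the function expects accuracy-style scores where higher is better.

```python
from statistics import mean


def has_plateaued(scores, window=3, min_gain=2.0):
    """Compare the mean of the latest `window` scores with the window before it."""
    if len(scores) < 2 * window:
        return False  # not enough sessions to judge yet
    recent = mean(scores[-window:])
    previous = mean(scores[-2 * window:-window])
    return (recent - previous) < min_gain


improving = [55, 60, 66, 72, 78, 83]
stalled = [60, 70, 78, 79, 78, 79, 80, 79]

print(has_plateaued(improving))  # False
print(has_plateaued(stalled))    # True
```

For completion time, where lower is better, the same idea applies with the sign of the comparison flipped.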

Isolate Your Weak Game

If Sea Wolf is consistently weaker than Red Rock (or vice versa), dedicate extra sessions to your weaker game. Both games carry roughly equal weight in McKinsey's composite scoring, so a strong Red Rock performance can't fully compensate for a weak Sea Wolf score. Use the Sea Wolf simulation or Red Rock simulation individually to target specific weaknesses.

Prepare for the Sustainable Future Lab Separately

If your test email indicates an 85-minute assessment, set aside dedicated preparation time for the Sustainable Future Lab. Since this module tests behavioral judgment rather than analytical skills, the preparation approach is different: review situational judgment test frameworks, practice identifying the collaborative and structured response in ambiguous team scenarios, and read through our Sustainable Future Lab breakdown to understand the type of decisions McKinsey rewards. You won't need 15 simulation sessions for this module — but going in unprepared is a real risk.

Simulate Exact Test Conditions

For your final 3–4 sessions before the real assessment, replicate exact test conditions: quiet room, no notes, strict timer, no breaks between games. This builds the mental stamina McKinsey is testing and ensures your first experience with sustained, high-pressure decision-making isn't on the day that counts.

What Score Do You Need to Pass?

McKinsey doesn't publish exact score thresholds, but candidate outcome data suggests the following benchmarks.

Sea Wolf: You need to select a near-optimal or optimal ecosystem. Candidates whose species combinations reach 90% or more of the mathematical optimum consistently pass. Suboptimal selections that still form a viable ecosystem may pass depending on the overall candidate pool strength, but you shouldn't plan on clearing the bar with a "good enough" ecosystem.

Red Rock: Accuracy on data interpretation questions needs to be roughly 70–80% or higher, with reasonable completion speed. Getting questions right at a measured pace scores better than guessing quickly; accuracy is weighted more heavily than speed across both games.

Sustainable Future Lab: Scoring mechanics for this module are still emerging, but based on candidate reports and the situational judgment format, consistency across responses and alignment with collaborative, structured decision-making appear to be the key scoring dimensions. There's no "speed bonus" here — thoughtful, values-consistent answers matter more than fast ones.

Your performance across all assigned games is evaluated holistically. Excelling at one can partially compensate for a weaker performance on another, but every game contributes to your composite percentile. If you're assigned three games instead of two, each one carries weight — making balanced preparation across all modules even more important. Simulation practice helps you benchmark against these thresholds — after 8–10 sessions, you should be consistently hitting optimal or near-optimal scores before attempting the real assessment.
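To compare a practice session against the rough benchmarks above, a small helper like the following can be used. It is only a sketch: the 0.90 and 0.70 floors are this guide's approximate figures rather than published McKinsey thresholds, and the input names assume your simulator reports a Sea Wolf score alongside the best achievable score, plus a Red Rock count of correct answers.

```python
def meets_benchmarks(sea_wolf_score, sea_wolf_optimum,
                     red_rock_correct, red_rock_total,
                     sea_wolf_floor=0.90, red_rock_floor=0.70):
    """Return both ratios and whether each clears its assumed floor."""
    sea_wolf_ratio = sea_wolf_score / sea_wolf_optimum
    red_rock_accuracy = red_rock_correct / red_rock_total
    return {
        "sea_wolf_ratio": round(sea_wolf_ratio, 2),
        "red_rock_accuracy": round(red_rock_accuracy, 2),
        "sea_wolf_ok": sea_wolf_ratio >= sea_wolf_floor,
        "red_rock_ok": red_rock_accuracy >= red_rock_floor,
    }


# Hypothetical session: 460 out of a best possible 500, and 8 of 10 questions correct.
result = meets_benchmarks(460, 500, 8, 10)
print(result["sea_wolf_ratio"], result["red_rock_accuracy"])  # 0.92 0.8
```

Because performance is evaluated holistically, treat the two flags as a rough readiness signal rather than a pass/fail prediction.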

Common Mistakes That Cost Candidates Their Offers

Feedback from hundreds of McKinsey Solve candidates points to these as the most costly preparation errors.

Over-relying on guides without practicing. Reading about how the games work is not the same as playing them. Candidates who only read guides pass at roughly half the rate of those who combine guides with simulation practice. Understanding the mechanics and performing under pressure are fundamentally different skills.

Ignoring Red Rock. Most prep content focuses on Sea Wolf because it's the more novel game. But Red Rock carries equal weight in scoring, and many candidates lose critical points there due to lack of chart-reading practice. Don't assume your existing analytical skills will transfer without practice in McKinsey's specific format.

Not preparing for the Sustainable Future Lab. Candidates who receive the 85-minute format and walk into the Sustainable Future Lab completely blind are at a real disadvantage. The behavioral judgment format is unfamiliar to most analytical candidates, and the tendency to overthink or apply analytical frameworks to interpersonal scenarios often backfires. Even 1–2 hours of focused preparation on how situational judgment tests work and what McKinsey values in team settings can meaningfully improve your performance.

Never practicing under time pressure. The real assessment is strictly timed at ~35 minutes per game. Candidates who practice without a timer often freeze or rush when they encounter the actual countdown. Time pressure changes how your brain processes information — you need to experience that shift in practice, not for the first time on test day.

Starting too late. Preparation should begin 2–3 weeks before your scheduled test date. Starting three days before — or the night before — doesn't give your pattern recognition enough time to develop. The cognitive skills Solve measures take multiple sessions to build, and they can't be crammed.

Practicing the same scenarios repeatedly. If you're using a resource with fixed scenarios, you're training your memory, not your skills. After 2–3 repetitions of the same scenario, you're recognizing the answer rather than solving the problem. This creates false confidence that evaporates on test day when every scenario is new.

How Long Should You Practice?

Based on candidate outcomes, here's the recommended timeline.

Minimum effective preparation: 7–10 simulation sessions over 1–2 weeks. This is enough to develop basic pattern recognition and time management skills, though you may still have gaps in edge cases. If you're assigned the Sustainable Future Lab, add 1–2 hours of dedicated behavioral prep on top of your simulation schedule.

Optimal preparation: 15–20 sessions over 2–3 weeks with daily practice. This allows enough repetition for pattern recognition to become instinctive while giving you time to identify and address specific weaknesses. For candidates facing the 85-minute format, include Sustainable Future Lab review in your final week.

Diminishing returns: Beyond 25 sessions, improvement typically plateaus unless you're specifically targeting identified weak areas. At that point, adding more sessions of general practice produces less value than focused work on your lowest-scoring dimensions.

Each session takes 20–30 minutes including feedback review. That's a total time investment of roughly 5–10 hours for optimal preparation — a small commitment for an assessment that determines whether you get a McKinsey interview.
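The 5–10 hour figure follows directly from the session counts and durations given above, as this short calculation shows. The function name is just an illustrative helper.

```python
def total_hours(sessions, minutes_per_session):
    """Total practice time in hours for a given number of sessions."""
    return sessions * minutes_per_session / 60


low = total_hours(15, 20)    # 15 sessions at 20 minutes each
high = total_hours(20, 30)   # 20 sessions at 30 minutes each
print(low, high)  # 5.0 10.0
```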

You're ready for the real assessment when you can consistently score in the top tier across 3 consecutive simulation sessions under timed conditions.
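The readiness rule above can be written as a simple streak check over your most recent timed sessions. What counts as "top tier" depends on how your simulator reports scores, so the default threshold of 90 here is an assumption to adjust.

```python
def is_ready(timed_scores, top_tier=90, streak=3):
    """True if the last `streak` timed sessions all reached the `top_tier` score."""
    if len(timed_scores) < streak:
        return False
    return all(score >= top_tier for score in timed_scores[-streak:])


print(is_ready([70, 85, 91, 93, 95]))  # True
print(is_ready([91, 93, 88]))          # False
```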

Simulation vs. Other Preparation Methods

| Method | Best For | Limitation | Time Investment |
|---|---|---|---|
| PDF guides / eBooks | Understanding mechanics and strategies | Fixed examples, no feedback | 1–2 hours |
| YouTube walkthroughs | Visual learners seeing games for the first time | Passive learning, no interactivity | 30–60 minutes |
| Peer practice groups | Motivation and accountability | No access to realistic scenarios | Varies |
| AI-powered simulation | Developing actual test-day skills for Sea Wolf & Red Rock | Requires active engagement | 5–10 hours total |
| SFL-specific prep | Understanding the behavioral module format | Limited simulation available; judgment-based | 1–2 hours |

The optimal strategy combines a guide for foundational understanding with simulation practice for skill development. This combination produces pass rates roughly 2x higher than either method alone. For candidates facing the Sustainable Future Lab, adding targeted behavioral prep ensures you're covered across all modules.

Frequently Asked Questions

Is simulation practice allowed by McKinsey?

Yes. McKinsey does not restrict how candidates prepare for the Problem Solving Game. Using practice simulators, guides, or any other preparation materials is entirely permitted. McKinsey's own website encourages candidates to familiarize themselves with the assessment format before taking it.

How realistic are AI-generated simulation scenarios?

Modern McKinsey Solve simulators generate scenarios that closely mirror the actual assessment's complexity, data formats, and constraint types. The species data in Sea Wolf simulations uses the same caloric and environmental parameters as the real game. Red Rock simulations replicate the same chart types — scatter plots, bar charts, maps — with comparable data density.

How many simulation sessions should I complete?

We recommend 15–20 sessions over 2–3 weeks for optimal preparation. Most candidates see significant improvement after 5–7 sessions and reach their performance ceiling around session 20. Quality of practice — reviewing feedback, analyzing mistakes, targeting weaknesses — matters more than raw session count.

What's the difference between the Sea Wolf Solver and the simulator?

The Sea Wolf Solver helps you understand optimal species selection logic by calculating mathematically best combinations. The simulator is a practice platform for building your skills under realistic time pressure. They serve complementary purposes — the solver calibrates your understanding of what "optimal" looks like, while the simulator trains you to find optimal solutions quickly under test conditions.

When should I start practicing?

Begin simulation practice as soon as you receive confirmation that McKinsey's Problem Solving Game is part of your application process. Ideally, start 2–3 weeks before your scheduled assessment date. This gives you enough time for 15–20 practice sessions without cramming, and allows the pattern recognition skills to develop gradually rather than under last-minute pressure.

Can I practice on a tablet or mobile device?

The simulator runs in your web browser with no downloads required and works across devices. However, practice on the same device type you'll use for the real assessment — typically a laptop or desktop. The screen size and input method affect how you interact with data visualizations, and you want that experience to be familiar on test day.

How do I prepare for the Sustainable Future Lab?

The Sustainable Future Lab requires a different preparation approach than Sea Wolf or Red Rock. Since it tests behavioral judgment rather than analytical skills, focus on understanding situational judgment test formats, McKinsey's consulting culture and values, and how to identify the most collaborative and structured response in ambiguous team scenarios. Check your test invitation email — if it indicates 85 minutes rather than 65, you should expect this module and dedicate preparation time to it.

Build the decision-making skills McKinsey Solve actually tests. The Sea Wolf Solver helps you master optimal ecosystem logic, and the McKinsey Solve Simulation gives you unlimited timed practice with AI-generated scenarios. Start your preparation today.